LLM-based artificial intelligence (e.g. Gemini in Google Cloud) has exploded in adoption, but companies in the real economy, such as manufacturing, distribution, and B2B, have discovered a fundamental limitation: pure neural networks are probabilistic. They "guess" answers and can hallucinate, recommend technically incompatible products, or ignore commercial margins.

To solve this problem, many U.S. companies have not abandoned artificial intelligence. Instead, they have developed a fundamental concept: Hybrid AI Architecture.

In the recent Guide #1: Intelligent Recommendations, Upsell and Rules, OPTI Software detailed how this approach is the only viable path for implementing AI in B2B commerce. In this article, we explore the theory behind hybrid architecture and how we adapt it for the local midmarket, from data protection to on-premise solutions. Plus: the future of configurative bill of materials (BOM) generation.

1. From "Black Box" to Neuro-Symbolic AI

The concept of neuro-symbolic artificial intelligence (Neuro-Symbolic AI) is far from new. Its theoretical roots date back to the 1990s, when researchers such as Jude Shavlik, Henry Kautz, and Artur d'Avila Garcez explored the limitations of systems based purely on statistics and probabilities.

The neuro-symbolic theory from Silicon Valley unifies two historically distinct schools of thought:

- Symbol- and rule-based AI (Good Old-Fashioned AI - GOFAI): Uses strict logic, IF-THEN rules, and decision trees. It is 100% explainable and precise, but nearly impossible to scale when data is unstructured or comes in massive volumes.

- Connection-based AI (Deep Learning / Neural Networks): Excels at pattern recognition across huge datasets, learning from prior examples. Its limitation is that it operates as a black box: it lacks full explainability and cannot enforce strict rules, which is where hallucinations arise.

The ideal hybrid architecture combines both. The theory is that we use neural networks for associations and pattern identification (e.g. inferring a customer's intent solely from their rich browsing history), while verifying results through a symbolic engine (rules) that validates the final decision.

Legend: Safety architecture diagram in a custom implementation. See in guide

The hybrid architecture promoted by OPTI Software is built on validating AI results through the company's own deterministic rules. The goal is for artificial intelligence and AI agents to be explainable, measurable, and safe for the business environment.

2. The Data Problem: How to Protect Trade Secrets with a Local (On-Premise) Middleware

B2B companies want to avoid exposing confidential data (negotiated price lists, strategic stock levels, customer data) to external systems, and to ensure that AI does not learn from their proprietary data. There is a "privacy-first" approach, in which a critical component runs on-premise or in a private cloud environment:

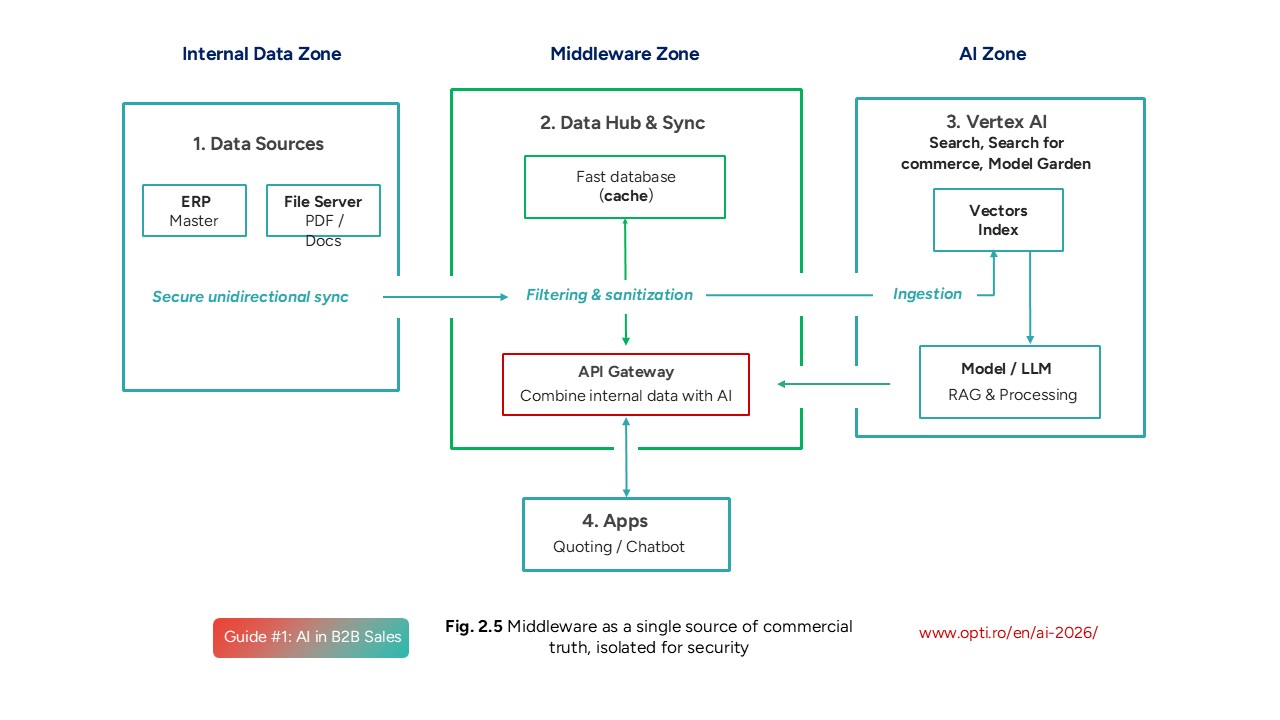

Legend: Middleware as a single source of commercial truth, isolated for security. See in guide

The essence of the privacy-first approach:

- Core management systems (ERP, WMS) are not modified and do not communicate directly with artificial intelligence.

- A centralised middleware is built to act as an isolated shield between the core systems and the AI.

- The middleware processes data and applies pseudonymisation (e.g. hashing customer IDs) before any information is sent to the cloud.

- The middleware keeps pricing rules and real stock levels on-premise. The AI model in the cloud returns only the recommended product IDs (the Retrieval and Scoring phase), and the local middleware immediately enriches them with prices specific to the logged-in customer and verifies availability (stock).

Sensitive data never reaches the AI model in the cloud, which supports compliance with NIS2 and GDPR regulations while keeping full control within the company.
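The privacy-first flow described above can be sketched in a few lines. This is a minimal illustration under stated assumptions, not OPTI's implementation: the names `SECRET_KEY`, `PRICES`, `STOCK`, `build_cloud_request`, and `enrich` are hypothetical, and the keyed hash (HMAC-SHA256) is our choice, since a plain hash of short customer IDs can be reversed by simple enumeration.

```python
import hashlib
import hmac

# Hypothetical secret that stays on-premise and is never sent to the cloud.
SECRET_KEY = b"replace-with-a-locally-stored-secret"

# Hypothetical on-premise data: negotiated prices per customer and real stock.
PRICES = {("C-1001", "SKU-1"): 12.50, ("C-1001", "SKU-2"): 7.80}
STOCK = {"SKU-1": 40, "SKU-2": 0}


def pseudonymise(customer_id: str) -> str:
    """Return a keyed hash (HMAC-SHA256) of the customer ID as a hex string."""
    return hmac.new(SECRET_KEY, customer_id.encode("utf-8"), hashlib.sha256).hexdigest()


def build_cloud_request(customer_id: str, viewed_skus: list) -> dict:
    """Build the payload for the cloud model: no prices, stock, or raw customer ID."""
    return {"visitor": pseudonymise(customer_id), "viewed": list(viewed_skus)}


def enrich(customer_id: str, recommended_skus: list) -> list:
    """Attach the customer-specific price locally and drop unavailable products."""
    result = []
    for sku in recommended_skus:
        price = PRICES.get((customer_id, sku))
        in_stock = STOCK.get(sku, 0) > 0
        if price is not None and in_stock:
            result.append({"sku": sku, "price": price})
    return result
```

In this sketch the cloud side only ever sees the pseudonymised visitor key and product IDs, while `enrich` runs entirely inside the company network on the product IDs that come back.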

3. The Speed of Innovation: AI in the Cloud or Running a Local LLM?

Many IT teams also evaluate running an open-source model (e.g. Llama, Mistral) as a local LLM on their own servers. While this may seem to offer total control, it carries costs and scalability limitations that must be considered. Here is a comparison:

Legend: Comparison table between Build / Custom vs Managed / Cloud Native

See the full comparison in the guide

Why do we recommend cloud services like Google's Vertex AI, combined with company data protection?

- Speed of technological evolution: Cloud algorithms evolve at a pace impossible for an in-house team to match. Pre-trained models benefit from continuous updates to the underlying architecture. If a company deploys a local LLM today, within 6 months it becomes outdated (legacy) and requires a dedicated team for permanent maintenance.

- Hidden costs and latency: Running predictive or generative models locally requires hardware infrastructure (GPU clusters, Kubernetes). Scalable recommendation systems also impose strict latency requirements (down to milliseconds), something difficult to achieve with an unoptimised local LLM.

- Budget efficiency: With a cloud API, the company pays strictly for usage (pay-as-you-go) and offloads maintenance complexity to Google (or another cloud provider), allowing focus on data quality, data protection (e.g. privacy-first), and defining the business rules that guarantee results.

4. The Near Future: Composite AI for Complex Quotes (BOM)

As we approach the 2027 horizon covered extensively in the guide, the boundary between search, recommendation, and quoting is blurring.

The ultimate solution for distributors of technical equipment (HVAC, electrical, construction materials) is what is known as Composite AI.

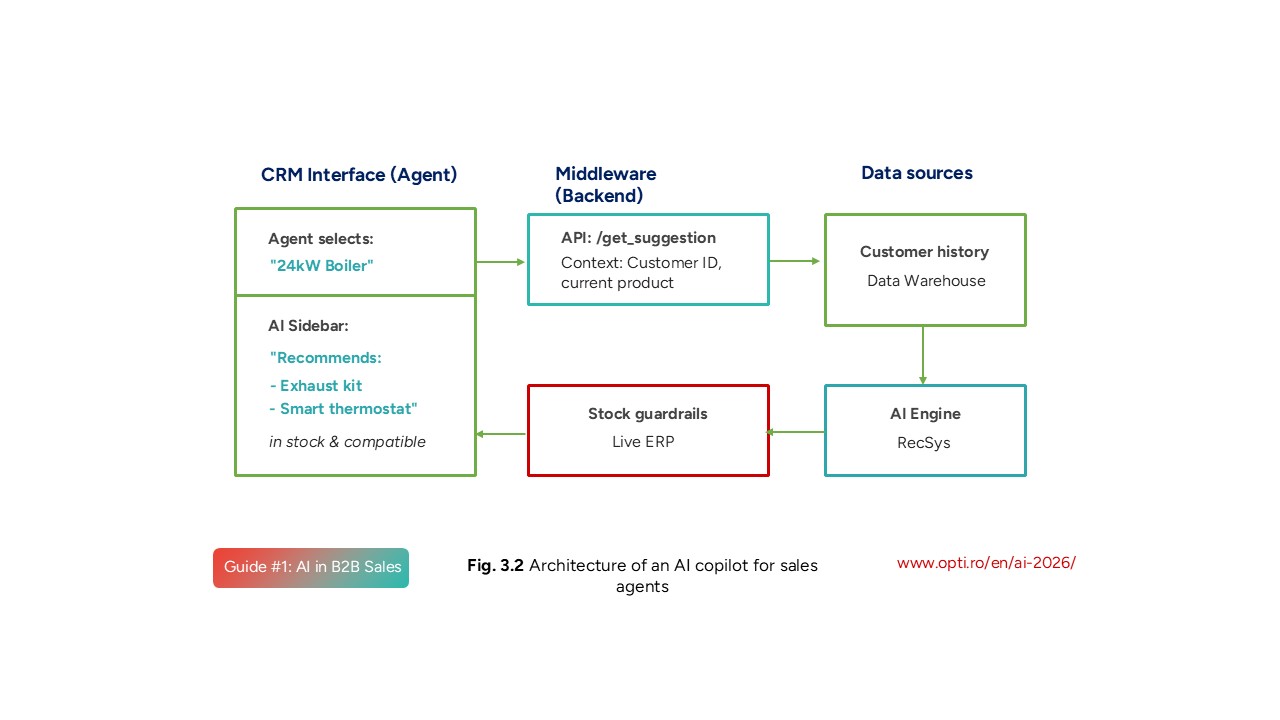

Unlike a simple AI that recommends a single product, an agent built on Composite AI can generate a complete solution: the Bill of Materials (BOM) required in any complex quoting process. The foundation is Deliverable 1 from the OPTI guide, the sales copilot:

Legend: Architecture of an AI copilot for sales agents. See in guide

A BOM example in practice:

- An interior designer uploads a PDF floor plan of a kitchen.

- They request all the furniture pieces needed, down to screws and handles.

- The Composite AI agent breaks down the request and uses GraphRAG (understanding spatial and logical relationships between parts, not just text search) to find thousands of compatible in-stock components.

- The AI agent validates the project through a constraints engine (e.g. do the doors fit the hinges?).
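The validation step in the list above can be illustrated with a tiny constraints engine. This is a sketch under assumptions: the `Part` structure, the attribute names `thickness_mm` and `door_thickness_mm`, and the rule of two hinges per door are hypothetical examples, not taken from the guide.

```python
from dataclasses import dataclass, field


@dataclass
class Part:
    sku: str
    kind: str                 # e.g. "door" or "hinge"
    qty: int = 1
    attrs: dict = field(default_factory=dict)


def hinges_fit_doors(bom):
    """Every hinge must accept the thickness of every door in the BOM."""
    violations = []
    doors = [p for p in bom if p.kind == "door"]
    hinges = [p for p in bom if p.kind == "hinge"]
    for door in doors:
        thickness = door.attrs["thickness_mm"]
        for hinge in hinges:
            low, high = hinge.attrs["door_thickness_mm"]
            if not low <= thickness <= high:
                violations.append(f"{hinge.sku} does not fit {door.sku} ({thickness} mm)")
    return violations


def enough_hinges(bom, per_door=2):
    """Assumed rule: each door needs `per_door` hinges."""
    doors = sum(p.qty for p in bom if p.kind == "door")
    hinges = sum(p.qty for p in bom if p.kind == "hinge")
    needed = doors * per_door
    if hinges < needed:
        return [f"need {needed} hinges, BOM has {hinges}"]
    return []


# Deterministic rules, evaluated in order on the AI-generated BOM.
CONSTRAINTS = [hinges_fit_doors, enough_hinges]


def validate_bom(bom):
    """Return all violations; an empty list means the BOM passes."""
    return [v for rule in CONSTRAINTS for v in rule(bom)]
```

Keeping each constraint as a separate function means a violation message can name the exact rule and parts involved, which keeps the agent's output explainable.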

Today, a quoting specialist spends 48 hours manually producing a BOM, whereas an advanced hybrid architecture (Composite AI) will generate a valid quote in just a few minutes, giving the distributor a massive competitive advantage.

5. Examples from OPTI's Experience

For distributors and manufacturers managing catalogues of tens or hundreds of thousands of products, the OPTI Software hybrid architecture translates into a clear execution pipeline.

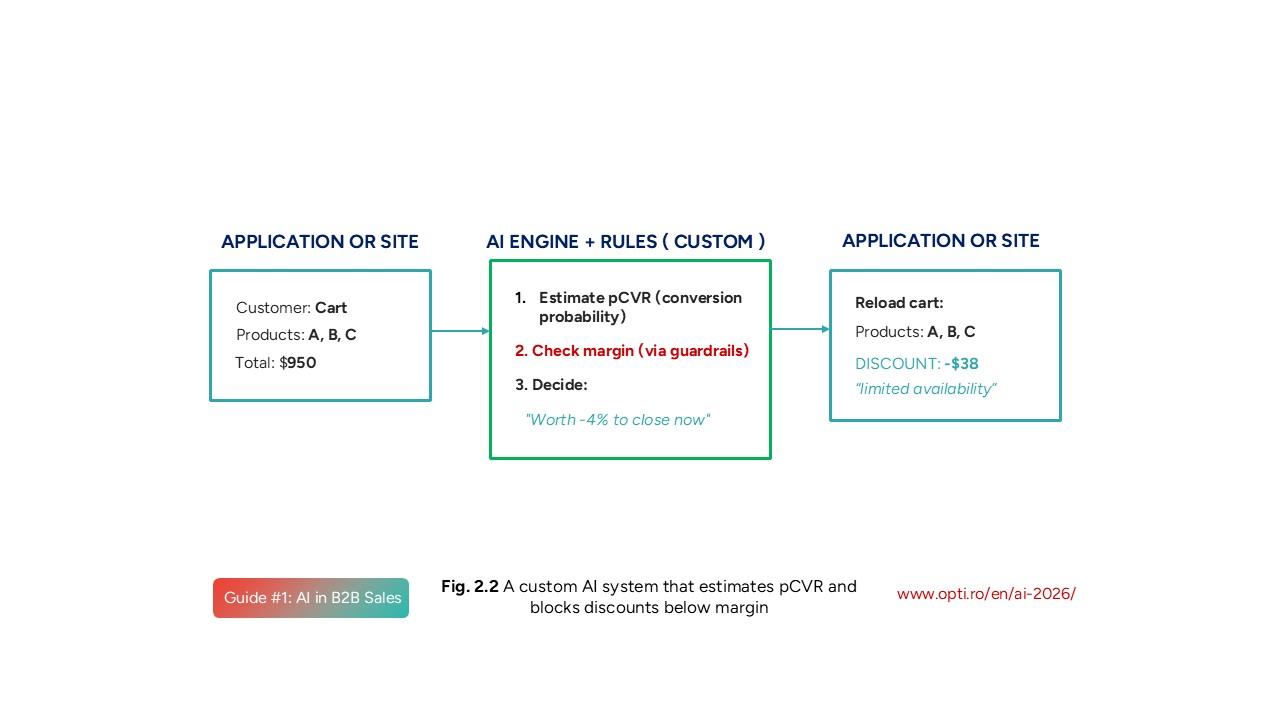

Legend: A custom AI system that estimates pCVR and blocks discounts below margin. See in guide

Candidate Generation and Scoring (the neural component):

- Deep Learning algorithms in solutions such as Vertex AI Search for commerce from Google (the Cloud Native variant) analyse user behaviour and product catalogues.

- They understand semantic associations ("copper pipe" is correlated with "copper fittings", even when the exact words differ) and generate a conversion probability score (pCVR).

Guardrails: Business Rules (the symbolic component):

- The system receives the AI recommendations but, before displaying them to the customer, applies the data contract and safety rules.

- If AI suggests a product that is out of stock, has a negative commercial margin, or is technically incompatible with the main product (e.g. AMD processors with Intel motherboards), the deterministic (symbolic) rules will block or demote that recommendation.
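A minimal sketch of such a guardrail layer follows. The field names, the `target_margin` threshold, and the split between blocking and demoting are our assumptions for illustration: here out-of-stock, incompatible, and negative-margin candidates are blocked, while candidates with a positive margin below the target are demoted to the end of the list.

```python
from dataclasses import dataclass


@dataclass
class Candidate:
    sku: str
    pcvr: float     # conversion probability returned by the neural model
    stock: int      # units available, looked up locally
    margin: float   # commercial margin as a fraction of price
    socket: str     # compatibility attribute, e.g. CPU socket


def apply_guardrails(candidates, main_socket, target_margin=0.10):
    """Return (ordered recommendations, {blocked sku: reason})."""
    kept, demoted, blocked = [], [], {}
    # Start from the neural ranking: highest pCVR first.
    for c in sorted(candidates, key=lambda c: c.pcvr, reverse=True):
        if c.stock <= 0:
            blocked[c.sku] = "out of stock"
        elif c.socket != main_socket:
            blocked[c.sku] = "incompatible with main product"
        elif c.margin < 0:
            blocked[c.sku] = "negative margin"
        elif c.margin < target_margin:
            demoted.append(c)   # still shown, but after healthier offers
        else:
            kept.append(c)
    return kept + demoted, blocked
```

Returning the blocked reasons alongside the final list gives the audit trail that makes each recommendation explainable and measurable.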

Data Cleaning:

- No AI system can function without a pristine data layer. Deduplication, attribute normalisation, and standardisation of units of measure from the ERP are mandatory prerequisites.

- Neural algorithms cannot "guess" that "Rob. buc. Cr." means "Chrome kitchen tap" without a symbolic dictionary and a data cleaning process.
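The cleaning steps above can be sketched as follows. The dictionary entry comes from the example in the text; everything else (the function names, the choice of metres as the standard unit, the row layout) is a hypothetical illustration rather than a description of a specific ERP integration.

```python
import re

# Symbolic dictionary; in practice curated by the people who own the catalogue.
DICTIONARY = {"rob. buc. cr.": "Chrome kitchen tap"}

# Divisors that convert a length in the given unit to metres.
PER_METRE = {"mm": 1000, "cm": 100, "m": 1}


def normalise_name(raw: str) -> str:
    """Collapse whitespace, ignore case, and expand known abbreviations."""
    key = re.sub(r"\s+", " ", raw).strip().lower()
    return DICTIONARY.get(key, raw.strip())


def to_metres(value: float, unit: str) -> float:
    """Standardise a length to metres; unknown units raise KeyError."""
    return value / PER_METRE[unit.strip().lower()]


def deduplicate(rows):
    """Keep the first row for each normalised product name."""
    seen = {}
    for row in rows:
        name = normalise_name(row["name"])
        seen.setdefault(name.lower(), {**row, "name": name})
    return list(seen.values())
```

Failing loudly on an unknown unit is a deliberate choice in this sketch: a silent guess at this stage would carry the error into every downstream recommendation.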

Ready to Adopt AI for Your Company?

Download the free OPTI Guide #1: Intelligent Recommendations, Upsell and Rules (172 pages) and discover how to implement Silicon Valley technology solutions, adapted for the realities of the B2B market.

Download free: OPTI Guide #1 - Intelligent Recommendations, Upsell and Rules (172 pages)
